
These systems implement distributed data processing operations to support large volumes of incoming data and to deliver data at high speed. Some systems focus on batch (offline) data processing, while other systems and services handle real-time (online) data processing, such as Storm, Spark Streaming, Kafka Streams, and Apache, which attract more attention because users demand rapid and smart responses to incoming data (Zaharia et al., 2012). For processing large graphs, special-purpose systems for distributed computing based on the data-flow approach were introduced (Gonzalez et al., 2014).

Due to the rapid evolution of information technologies and systems, the avalanche-like growth of data has prompted the emergence of new models and technical methods for distributed data processing, including MapReduce, Dryad, and Spark (Khan et al., 2014).

The contributions of this chapter are: initializing a state-of-the-art data workflow architecture design that can be used in industrial applications; investigating the most recent big data tools for constructing the novel data workflow architecture; and illustrating the major functional components of the proposed system architecture.

Apache Spark is used to scale the data computing process and to standardize data in the data warehousing procedure. With data persisted in HDFS/ADLS, downstream systems can use either a data visualization tool or an API to access the data.

The proposed data pipeline starts with a standard data processing workflow built on Apache Airflow, uses GitLab for source code control to facilitate peer code review, and uses CI/CD for continuous integration and deployment. Motivated by the current demand in big data analytics and industrial applications, this chapter illustrates and investigates a novel sustainable big data processing pipeline built from a variety of big data tools. In the subsequent data mining step, the data can be processed to produce analytics for end users.

The upstream vendor data is ingested into a data lake, where the source data is maintained and goes through cleaning, scrubbing, normalization, and data insight extraction. The sustainable automation can consolidate all ETL, data warehousing, and analytics tasks on one technology platform.
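The cleaning, scrubbing, normalization, and insight extraction stages can be pictured with a minimal plain-Python sketch. This is only an illustration under stated assumptions: the record fields (`vendor`, `value`), the function names, and the choice of min-max scaling are hypothetical and do not come from the chapter, which performs these steps at scale with tools such as Apache Spark.

```python
from statistics import mean


def clean(records):
    """Cleaning: drop records whose 'value' field is missing (hypothetical schema)."""
    return [r for r in records if r.get("value") is not None]


def scrub(records):
    """Scrubbing: canonicalize vendor names and remove exact duplicates."""
    seen = set()
    result = []
    for r in records:
        key = (r["vendor"].strip().lower(), r["value"])
        if key not in seen:
            seen.add(key)
            result.append({"vendor": key[0], "value": key[1]})
    return result


def normalize(records):
    """Normalization: min-max scale 'value' into [0, 1] (an assumed choice)."""
    if not records:
        return []
    values = [r["value"] for r in records]
    low, high = min(values), max(values)
    span = (high - low) or 1  # avoid division by zero when all values are equal
    return [{**r, "value": (r["value"] - low) / span} for r in records]


def extract_insight(records):
    """Insight extraction: a toy summary of the processed records."""
    if not records:
        return {"count": 0, "mean_value": None}
    return {"count": len(records), "mean_value": mean(r["value"] for r in records)}


def process(raw_records):
    """Run the four stages in the order described in the text."""
    return extract_insight(normalize(scrub(clean(raw_records))))
```

Keeping each stage as a separate function mirrors how the stages would later become separate tasks in a workflow scheduler, so each one can be retried or monitored on its own.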

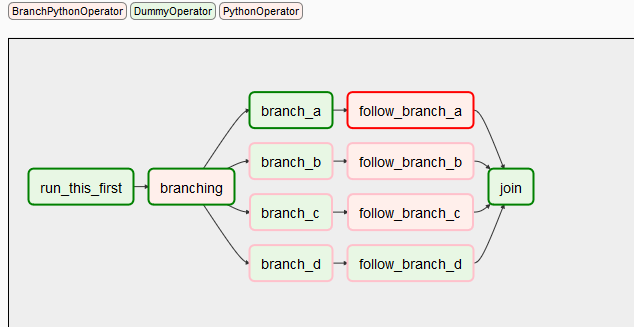

Big data analytics is an automated process that uses a set of techniques or tools to access large-scale data and extract useful information and insight. This process involves a series of customized and proprietary steps, and it requires specific knowledge to handle and operate the workflow properly. Due to the 4V nature of big data (Volume, Variety, Velocity, and Veracity), a robust, reliable, and fault-tolerant data processing pipeline is required. The proposed approach will help application developers overcome this challenge.

Apache Airflow is a cutting-edge technology for big data analytics that coordinates data processing workflows and data warehouses. It was developed by engineers at Airbnb to manage internal workflows productively. In 2016, Airflow became affiliated with Apache and was made available to users as open source. Airflow is a framework that executes, schedules, distributes, and monitors jobs, and it can handle either interdependent or independent tasks. To operate each job, a directed acyclic graph (DAG) definition file is required. This definition file contains the collection of tasks for developers to run, organized by their relationships and dependencies.
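The idea of a DAG definition, a collection of tasks organized by their dependencies, can be sketched with the Python standard library alone. The example below is not Airflow code and does not use the Airflow API; the task names are hypothetical, and `graphlib` (Python 3.9+) stands in for the scheduler only to show how dependencies determine a valid run order and why the graph must be acyclic.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical tasks; each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "ingest_vendor_data": set(),
    "clean": {"ingest_vendor_data"},
    "normalize": {"clean"},
    "extract_insights": {"normalize"},
    # Depends only on ingestion, so it is independent of the cleaning branch.
    "archive_raw_files": {"ingest_vendor_data"},
}


def execution_order(dependencies):
    """Return one valid run order in which every task follows its dependencies."""
    return list(TopologicalSorter(dependencies).static_order())


def is_acyclic(dependencies):
    """Return True if the dependency graph has no cycle."""
    try:
        execution_order(dependencies)
    except CycleError:
        return False
    return True


if __name__ == "__main__":
    for task in execution_order(DEPENDENCIES):
        print("running", task)
```

Several orders can be valid for the same graph: `archive_raw_files` may run at any point after `ingest_vendor_data`, which is the kind of freedom a scheduler can use to run independent tasks in parallel.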
